Your product analytics are broken. We’re here to help.

For any online business, it’s increasingly critical to understand your customers’ interests, behaviors, and experiences. To put it bluntly: understand your customers or someone else will.

There are two major shifts that fuel this urgency for understanding customers; one of them is technical and the other is cultural.

The cultural shift is in our expectation of easy access to information; every person in the company should be able to answer their questions quickly, without having to rely on a data expert.

Until recently, stakeholders would request insights from isolated business intelligence teams, and get static reports in a couple of days, or weeks, or never, depending on the BI team’s workload and the complexity of the request.

Now, every person in the company, from growth managers to product owners, is expected to be able to answer their questions fast, without having to go through an expert, so they can act quickly and build better experiences for their customers.

These insights need to be quickly accessible through point-and-click user interfaces like Amplitude, Mixpanel, Looker, Mode, etc.

Today, the role of the best data teams is not to generate reports, but to support a culture of self-service analytics, providing easy-to-use analytics infrastructure, and more importantly, helping everyone ask the right questions, get the right answers, and make the best decisions based on data they can trust.

The technical shift is in the sheer volume and granularity of data, which can enable us to move more quickly, be more predictive, and make informed decisions that drive our business. Today, every important user interaction is logged to create a holistic picture of users’ experiences. Teams and tools build aggregates of those event streams to provide leading indicators of success, along with key insights which act as a compass to inform how our products need to change to best serve our customers.

So why are product analytics so broken?

We have all this great technology, so why are our product analytics so broken? Why is it so hard to find data we can rely on? Why is it so easy to make the wrong decision?

Imagine you’re part of the Playlist team at Spotify. You want to make it easier for people to create and use playlists. Your metric for success is the conversion of people creating a playlist, adding more than 5 songs to it, and playing it. As an experiment to increase that conversion, you’re adding the ‘+’ button to more locations in the app.
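To make that metric concrete, here is a minimal sketch of how such a conversion could be computed from an event stream. The event shape (`user`, `event`, `playlist`) and the `Playlist Played` event name are assumptions for illustration only, not Spotify's actual schema.

```python
from collections import defaultdict


def playlist_conversion(events):
    """Share of playlist creators who added more than 5 songs and then played the playlist.

    `events` is assumed to be a chronologically ordered list of dicts with the
    hypothetical keys "user", "event", and "playlist".
    """
    created = set()                 # (user, playlist) pairs that were created
    songs_added = defaultdict(int)  # songs added per created playlist
    converted_users = set()
    for e in events:
        key = (e["user"], e["playlist"])
        if e["event"] == "Playlist Created":
            created.add(key)
        elif e["event"] == "Song Added to Playlist" and key in created:
            songs_added[key] += 1
        elif e["event"] == "Playlist Played" and songs_added[key] > 5:
            converted_users.add(e["user"])
    creators = {user for user, _ in created}
    return len(converted_users) / len(creators) if creators else 0.0


def make(user, event, playlist):
    # Helper for building sample events (hypothetical shape).
    return {"user": user, "event": event, "playlist": playlist}


sample = (
    [make("u1", "Playlist Created", "p1")]
    + [make("u1", "Song Added to Playlist", "p1")] * 6
    + [make("u1", "Playlist Played", "p1")]
    + [make("u2", "Playlist Created", "p2")]
    + [make("u2", "Song Added to Playlist", "p2")] * 2
    + [make("u2", "Playlist Played", "p2")]
)
print(playlist_conversion(sample))  # one of the two creators converted
```

Note that the calculation only holds if every part of the app logs the interaction under exactly the same event name.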

Now imagine you’re an iOS developer on this team. When making this change, you’re updating code for a subset of the roughly nine locations in the iOS app where a user can add songs to playlists (the song fullscreen view, the album view, the queue view, etc.). Behind these app locations there could be at least nine different code paths. Each of those code paths needs an analytics event logging call. If even one is missing, we won’t be able to monitor the metric we set out to change.

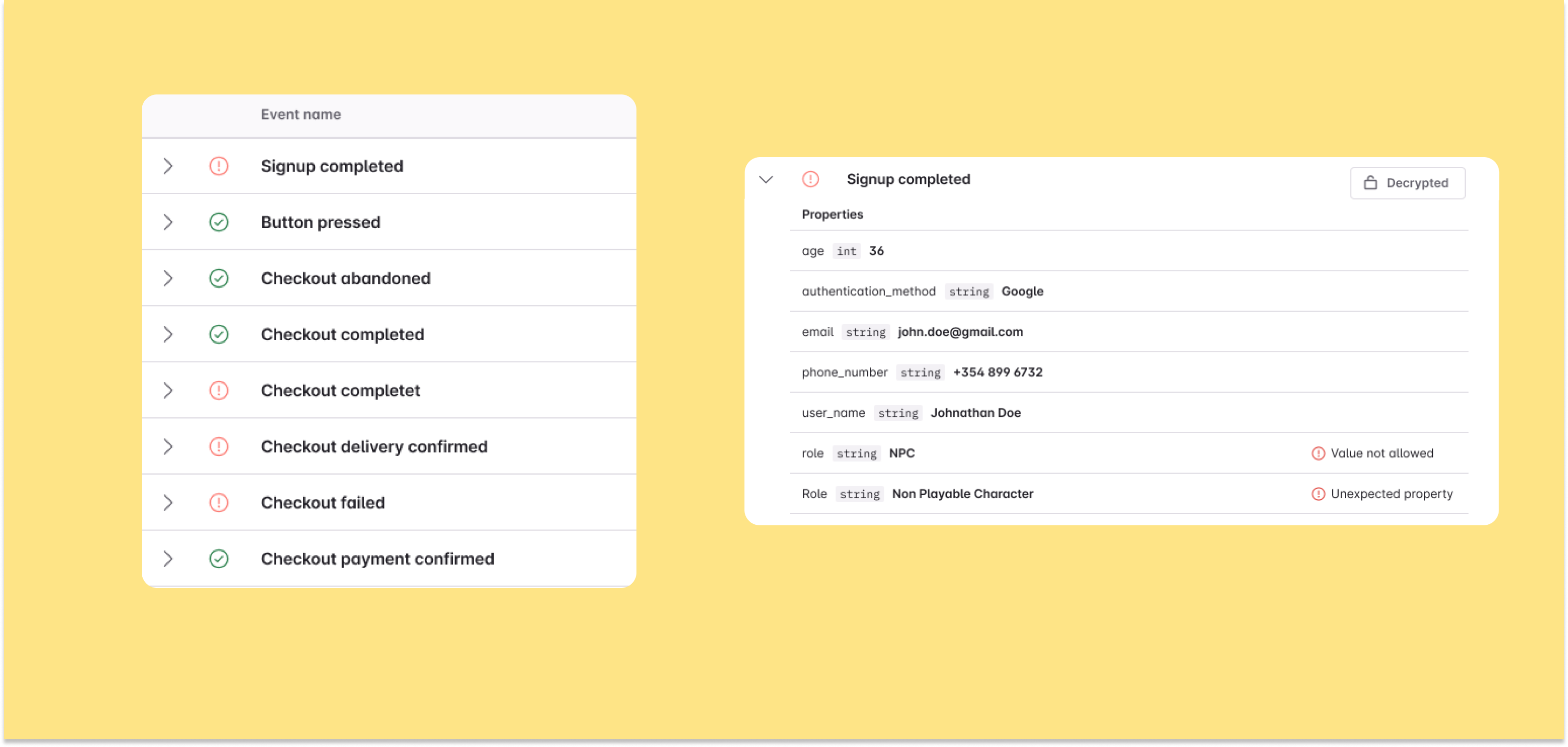

The “right” name for the user interaction is not likely to be centrally documented, leaving the naming work to each developer. Since (1) these different code paths are typically not written at the same time, and (2) they are typically not written by the same developer, in practice we usually end up with a flurry of different names for the same user interaction, such as addToPlaylist in one place and song_added in another.

But that sounds fine, right? Anyone who looks at those names will be able to roughly guess which user interactions they refer to, right? Of course not. These are just a handful of event names in a sea of similar-sounding names. You mostly can’t guess what exact user action these names refer to, which makes this mess of different names very misleading.
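For contrast, here is a minimal sketch of what a single, centrally defined name could look like in code. Everything in it is hypothetical: the `Event` enum, the `track` function, the `source` property, and the in-memory `logged` list stand in for whatever analytics SDK a team actually uses.

```python
from enum import Enum


class Event(str, Enum):
    # One agreed-upon name per user interaction (hypothetical definitions).
    SONG_ADDED_TO_PLAYLIST = "Song Added to Playlist"
    PLAYLIST_CREATED = "Playlist Created"


logged = []  # stand-in for a real analytics SDK


def track(event, **properties):
    # Reject free-form strings so nobody can invent a new name at the call site.
    if not isinstance(event, Event):
        raise TypeError(f"unknown event name: {event!r}")
    logged.append({"event": event.value, **properties})


# Two different code paths, written by different developers, share one definition.
def add_from_album_view(song_name):
    track(Event.SONG_ADDED_TO_PLAYLIST, song_name=song_name, source="album_view")


def add_from_queue_view(song_name):
    track(Event.SONG_ADDED_TO_PLAYLIST, song_name=song_name, source="queue_view")


add_from_album_view("Song A")
add_from_queue_view("Song B")
print({entry["event"] for entry in logged})  # a single event name
```

The design choice here is simply that call sites pick from a shared list instead of typing a string, so the nine code paths cannot drift apart.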

Let’s jump into another hypothetical role at Spotify. Imagine you are a product manager on their playlist team.

As the feature update rolls out, you’re trying to determine whether the team has managed to make it easier to create and use playlists. What does the conversion we set out to impact look like, from creating a playlist, to adding more than 5 songs, to playing it?

You start digging into the data. You find three different user interactions that appear to represent adding songs to a playlist. Which one is it? You start distrusting the data, and even your own ability to extract the true results from it. So you reach out to your data scientist for help.

Boom. All of a sudden you’re back in the last decade. You need to go through a data expert to get answers to your questions. The bad event logging is blocking folks from self-serving analytics and insights. Information is no longer readily available to anyone who needs it.

Now, imagine being a data expert at Spotify.

You start digging around your data, trying to figure out how many different event names there actually are. You discover 23 different types. (Yes, I know, there were only nine app locations to add a song to a playlist, but that was only the iOS app. There are more on Android, Web, Desktop, Apple TV, ...)

Next, you start building a huge SQL query to join the 23 event tables. But not only do the names of the user interactions not match. The metadata, which contains important context such as the song name, artist name, and playlist name, doesn’t match either.

You try to join the songName on the addToPlaylist event with song_name on the song_added event. (Then you find a field called “name,” and think, “Wait, is this artist name, user name, or song name? Let me check a few of the values to confirm… Okay, artist name, so skip that field when matching song names.” But this is another story about using unique property names when building analytics taxonomies, and we’ll save that for another post.)
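A minimal sketch of the reconciliation work hiding behind that join might look like the following. Only the two event names and two property names mentioned above come from this story. The canonical names, the event dict shape, and the decision to drop the ambiguous `name` field are assumptions for illustration.

```python
# Map every known variant to one canonical event name (assumed canonical form).
EVENT_ALIASES = {
    "addToPlaylist": "Song Added to Playlist",
    "song_added": "Song Added to Playlist",
}

# Map every known property variant to one canonical key.
# The ambiguous "name" field is deliberately left out, so it gets dropped.
PROPERTY_ALIASES = {
    "songName": "song_name",
    "song_name": "song_name",
}


def normalize(raw_event):
    """Return the event under canonical names, or None if the event name is unmapped."""
    canonical = EVENT_ALIASES.get(raw_event["event"])
    if canonical is None:
        return None
    properties = {
        PROPERTY_ALIASES[key]: value
        for key, value in raw_event["properties"].items()
        if key in PROPERTY_ALIASES
    }
    return {"event": canonical, "properties": properties}


raw = [
    {"event": "addToPlaylist", "properties": {"songName": "Song A"}},
    {"event": "song_added", "properties": {"song_name": "Song B", "name": "Artist X"}},
]
normalized = [normalize(e) for e in raw]
print(normalized)
```

With 23 variants instead of two, both mapping tables have to be built by hand and checked value by value, which is where the day or two goes.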

The probability that you’ll get this join query right is slim to none. But, for the sake of this hypothetical, let’s say you spend a day or two on it and end up confident that you actually got it right. Except, a couple of days later, Android releases a new app version, where the 24th version of the user action gets created. 🤦♀️😭 (And, in the most likely scenario, we won’t discover that for a few more weeks, which means our analytics will be skewed.)
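One way to at least notice that new variant sooner is to flag any event name that the hand-built query doesn’t know about. This is a rough sketch under assumed inputs: the `known_names` set and the new `playlist_song_add` name are made up for the example.

```python
from collections import Counter


def unmapped_event_names(raw_events, known_names):
    """Count occurrences of event names that are not in the known set."""
    return Counter(
        e["event"] for e in raw_events if e["event"] not in known_names
    )


known_names = {"addToPlaylist", "song_added"}  # the variants the query already covers
incoming = [
    {"event": "addToPlaylist"},
    {"event": "playlist_song_add"},  # hypothetical name from a new Android release
    {"event": "playlist_song_add"},
    {"event": "song_added"},
]
unknown = unmapped_event_names(incoming, known_names)
print(unknown)
```

A check like this only tells you that something unfamiliar showed up. Someone still has to work out whether it is the same user action under yet another name.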

But let’s jump back to the product manager. You’re happy you managed to get time from the data scientist to hack this together. You immediately have follow-up questions to decide the next steps. Unfortunately, the data expert is already knee-deep in some other data request, so you won’t receive an answer to your follow-up questions.

Another blow. You’re back in the last decade again, bottlenecked by the throughput of data scientists.

This is not the only feature and metric you are working on. You’re responsible for understanding the holistic user experience: what works, what doesn’t, how users typically interact with the product, what good engagement looks like, and so on. So the above problem is not limited to a single user interaction.

You have this problem for 647 different types of user interactions:

- Song Played

- Account Created

- Song Added to Playlist

- Playlist Created

- ...

Every time you want to make a product decision, you need an analyst or a data engineer to review the data and make sure that it’s correct. You have data, but it’s unreliable, inconsistent, and requires hands-on help from an expert. At first this just slows you down, but over time you just give up. You stop asking questions. You no longer make data-driven decisions.

Your product analytics are broken. And we’re here to help.

We’ll be sharing more on this blog about how Avo can help you get back to that dream state, where everyone has access to reliable analytics, can make data-driven decisions, and can drive growth. We’re in this together, and we can’t wait to show you how.
