In this article

Detect spikes in your event data with the Avo Audit dbt package

Avo Audit package is live! Audits for event volume anomalies, with macros for more types of issues coming soon.

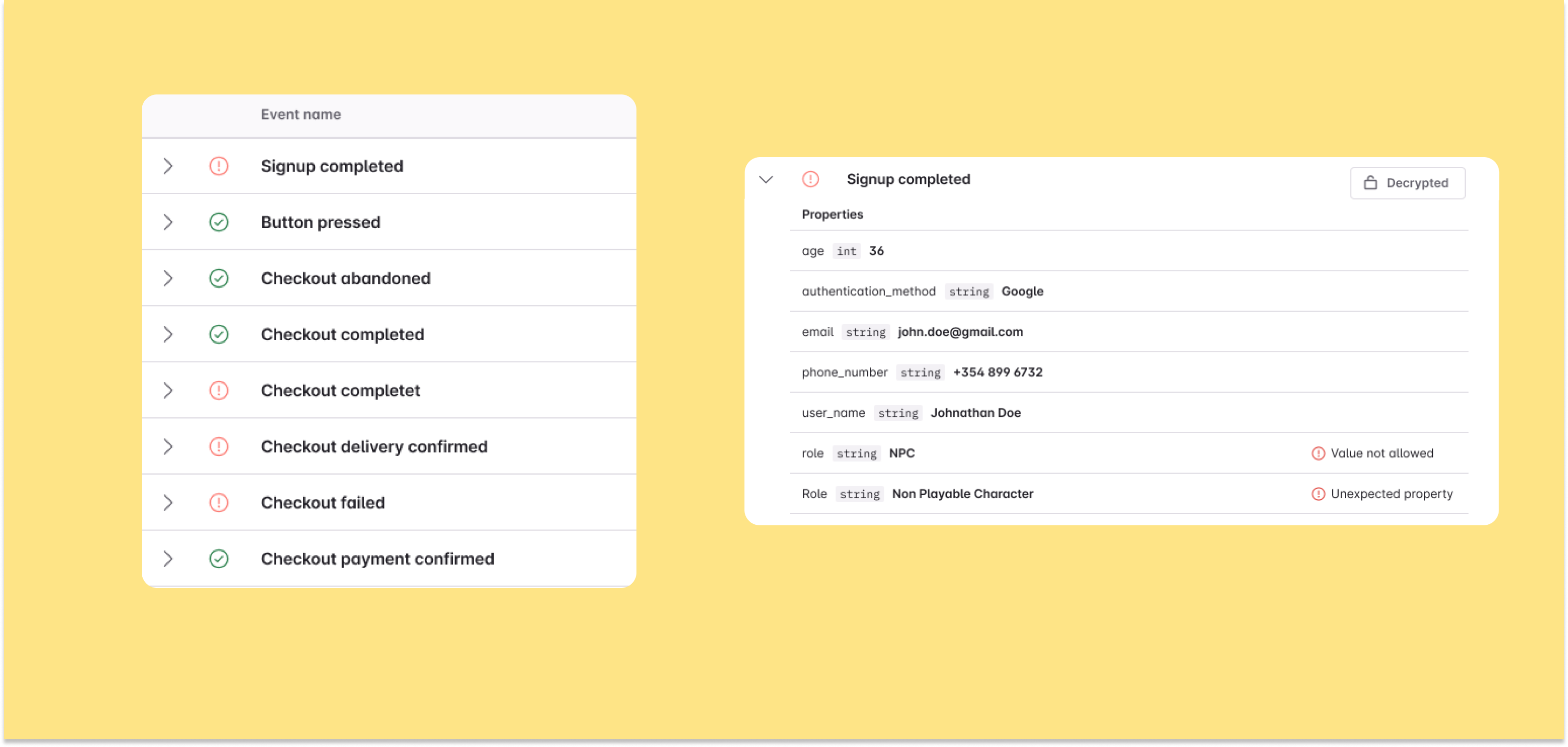

Avo has been building tools for tracking plan management, collaboration and validation for 4 years now – and we’ve learned a lot along the way. During that journey we built the Inspector, which is an observability tool that identifies issues in your analytics calls. While that has proven incredibly valuable for a lot of our customers, we’ve found that many teams rely on the analytics data stored in their data warehouse.

After doing some further research, we found that teams are experiencing the same issues that Inspector surfaces in their data warehouses – and more. That’s why we built the Avo Audit dbt package. It is open source and free to use, and is focused on raw data audits in your warehouse. Version 1.0.0 includes audits for event volume anomalies, with macros for more types of issues coming soon.

Below you can find examples of how we recommend using the macros.

Macro detect_event_anomaly

detect_event_anomaly is intended to be used as an investigative tool or as a CI test. It is designed to be run against a big table with all your raw events.

If your events are in separate tables, we created a helper macro to join them together into a single table before running the test.

detect_event_anomaly as a model

The detect_event_anomaly macro can also be set up as a model to run against your table to see the output properly. This is something that we recommend doing if the tests start failing, then running it as a model gives insight into when the anomaly started and the severity of the difference between days.

To see an example of how the output looks like when there is a sudden drop in event volume, we will start be seeding the database with the following CSV as test data

data/event_data.csv: Event Data table

Then create a model called event_anomaly_table.sql and copy the following snippet

Then run the following commands:

$ dbt seed

$ dbt run -m event_anomaly_table

Resulting table would look like this if there are any anomalies (BigQuery):

Signal can be one of three values; 1 (a spike), 0 (no anomaly) or -1 (a drop)

In this example we compare the volume of the Navigated event sent from Web for each day against the total event volume. For the comparison we use the avg_percentage and std_percentage to decide if the spike or drop is high enough to be counted as an anomaly.

Credit for algorithm:

Brakel, J.P.G. van (2014). "Robust peak detection algorithm using z-scores". Stack Overflow.

Available at: https://stackoverflow.com/questions/22583391/peak-signal-detection-in-realtime-timeseries-data/22640362#22640362 (version: 2020-11-08).

detect_event_anomaly as a test

Setting this macro up as a test is recommended and it will by default run against your data from the past 15 days, checking if any anomalies occurred in that time period. The time period can be adjusted with additional parameters.

Example of Dbt test failure

Let's set up a test using the macro that will fail. We will use the same csv file to seed the database.

data/event_data.csv: Event Data table

Create the following files:

Required input variables for the macro are:

name: <Name of the table containing raw events>

event_name_column: <column name for event name>

event_date_column: <column name for event date>

event_source_column: <column name for event source>

With these files set up, we can run the following commands

$ dbt seed

$ dbt run -m anomaly_test

$ dbt test

After running the test we see this error:

Completed with 1 error and 0 warnings:

Failure in test avo_audit_detect_event_count_anomaly_test(models/anomaly_test.yml)

Got 7 results, configured to fail if != 0

Now we have connected anomaly detection with our seeded table, with a test that errors if any rows are returned.

You can now set this up against your raw events table and use Slack alerts to notify you immediately if the test starts failing

We hope that you find Avo Audit useful and we’re excited to hear any feedback or thoughts. Please don’t hesitate to contact us via email, in our #dbt in Right Track Slack or Post an Issue on Github

Block Quote