Tracking the right product metrics

Best practices: Be more efficient when shipping product with reliable data. Track the right product metrics by aligning stakeholders on the purpose of a product release. Find the highest-impact lowest-effort tracking to understand its success – for every feature release.

At Avo, we receive a number of requests to recommend best practices. In this first post in our best practices series, I’d like to tell you about the Purpose Meeting, a great platform to find and prioritize the highest-impact lowest-effort tracking to understand the success of every feature release.

Meet the Purpose Meeting

purpose meeting

/ˈpəːpəs ˈmiːtɪŋ/

noun

A platform to align stakeholders on the purpose of a product release, and map out the highest-impact lowest-effort tracking to understand its success.

A 30-minute meeting where stakeholders go through these 3 steps: align on goals, commit to metrics, and design data.

We developed the Purpose Meeting at QuizUp, to make our data better. Before every feature release, we’d gather the stakeholders for 30 minutes – an engineer from each development platform (iOS, Android, web, backend), a PM, a designer, a data specialist – and we’d go through these steps ☝️

The impact of doing this for every feature release went way beyond ensuring we had the highest-impact lowest-effort tracking in place to understand the success of our releases. It turned out to be a fundamentally helpful process to ensure alignment on why we were shipping what we were shipping.

Purpose meetings revolutionized engineering buy-in for analytics at QuizUp. Engineers would request purpose meetings before they kicked off feature development because it made their work more efficient and purposeful. With analytics defined before writing any feature code, iOS and Android would always be in sync. It eradicated the need to refactor code to add analytics after the fact. Analytics went from being a “chore” that was done “for the data team” and “not for the end user”, to something that devs were interested in to understand how successful their release was. The share of developers looking up release metrics in Amplitude skyrocketed, from a couple of developers to the majority of developers being curious.

Your data is a product. It has stakeholders.

Your data is a product. It should be considered from the perspectives of usability, market, business viability and engineering feasibility, just like other products. The stakeholders across these four pillars fall into two groups:

Data consumers: Typically Product Managers and Data Scientists, who consume data to build insights and make decisions.

Data producers: Typically Product Developers who add tracking calls to the product code base to produce data for the consumers.

To get the most out of your data, you should align these stakeholders, and agree on what data is important and attainable. If you don’t, you won’t be able to prioritize your tracking based on impact and effort.

Purpose Meeting: Deciding the highest-impact lowest-effort tracking

1. Align on goals

The first step is knowing the reason for your release. Define your goals by asking yourselves questions like:

- What are we trying to help our users accomplish?

- What is the problem that we are trying to solve?

- Who are we solving it for?

- What does success look like?

Iterate and discuss your goal until you have clarity and alignment for all stakeholders.

A good goal aligns us on the problem we want to solve for our users and what value that brings them. A bad goal is framed only from our own business perspective.

A good goal supports our other goals. A bad goal creates ambiguity around what’s most important.

A good goal encompasses the whole problem we want to solve. A bad goal leads us to a local maximum by focusing only on a part of the problem.

A good goal is specific enough that it helps us make better decisions for the release. A bad goal leads us to want to expand our work out of scope by being too broad.

A good goal is one that all stakeholders can align behind. If you get the right group of people together, the room (or... ehh... Zoom…) will develop great ideas together. A bad goal decreases clarity, muddies up the release missions and obscures the goal of the release.

2. Commit to metrics

Next up is to commit to how you will measure the success of your goal. You can do this by asking questions like:

- How will we know if this goal is successful?

- What steps will the user need to go through to achieve the goal?

- Are there likely user journey drop-offs or dead-ends that we’d want to detect?

It can be very helpful to think through what our success report and narrative will look like, because it clarifies requirements for the type of data we will need, which helps us design the relevant data in step 3:

- How will we measure the impact of the release? Compare cohorts of users? A/B test the release? Change over time?

- What is a relevant metric to measure the success of our users?

- Is it the conversion through a set of steps? E.g. open the app and choose a song to play.

- Is it the total count of something? E.g. count of users who play a song per day, or just total number of songs played per day?

- Is it a rate? E.g. day one retention, or checkout conversion.

- How do we visualize these? Time series? Bar chart with conversion funnel?

- How do we visualize the comparison? Bar per group? Line per group?
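To make the three metric shapes above concrete, here is a minimal sketch that computes a conversion, a user count, and a total count from the same event log. The event names (“App Opened”, “Song Played”), the log format, and the function names are assumptions for illustration, not a prescribed schema. The conversion here ignores event ordering within the day, which a real funnel tool would account for.

```python
# Hypothetical event log: (user_id, event_name, day). Names are illustrative only.
EVENTS = [
    ("u1", "App Opened", "2020-05-01"),
    ("u1", "Song Played", "2020-05-01"),
    ("u1", "Song Played", "2020-05-01"),
    ("u2", "App Opened", "2020-05-01"),
    ("u3", "App Opened", "2020-05-01"),
    ("u3", "Song Played", "2020-05-01"),
]


def users_who(events, event_name, day):
    """Return the set of users who triggered event_name on the given day."""
    return {user for user, name, d in events if name == event_name and d == day}


def daily_metrics(events, day):
    """Compute one conversion and two counts for a single day."""
    openers = users_who(events, "App Opened", day)
    players = users_who(events, "Song Played", day)
    total_plays = sum(
        1 for _, name, d in events if name == "Song Played" and d == day
    )
    converted = openers & players  # opened the app AND played a song
    return {
        # Conversion through a set of steps: open the app, then play a song.
        "conversion_open_to_play": len(converted) / len(openers) if openers else 0.0,
        # Count of users who played a song that day.
        "users_playing": len(players),
        # Total number of songs played that day.
        "songs_played": total_plays,
    }


metrics = daily_metrics(EVENTS, "2020-05-01")
```

Note how “users who play a song per day” and “songs played per day” diverge on the same data: one heavy listener moves the second number but not the first, which is why the choice between them is worth settling in the meeting.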

Iterate on your metric until stakeholders agree that it would help determine the positive or negative impact of the release:

A good metric has clear definitions. A bad metric is vague. For example “create a playlist and add at least 5 songs to it”, vs “use playlists”. Or for example, what defines “active” users? Is it enough to open the app or do they need to play a song? Are we talking about daily, weekly or monthly “active”?
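One way to check whether a definition is clear enough is to see if it can be written as a yes/no rule. The sketch below encodes “create a playlist and add at least 5 songs to it”; the data shape (a list of playlists, each a list of song IDs) and the function name are assumptions for illustration.

```python
def has_meaningful_playlist(playlists, min_songs=5):
    """True if the user has at least one playlist with min_songs or more songs.

    `playlists` is a hypothetical structure: one list of song IDs per playlist.
    """
    return any(len(songs) >= min_songs for songs in playlists)


# A user with an empty playlist "uses playlists" under the vague definition,
# but does not qualify under the specific one.
vague_but_not_specific = has_meaningful_playlist([[]])
qualifies = has_meaningful_playlist([["s1", "s2", "s3", "s4", "s5"]])
```

“Use playlists” cannot be written down this way without first deciding what counts, which is exactly the ambiguity the meeting should resolve.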

A good metric has one clear way to be understood and visualized. A bad metric can be interpreted in multiple ways.

A good metric will keep us aligned on whether the release was a success or not. A bad metric will leave ambiguity over the success. For example “median # songs per playlist” would be bad to measure the success of increasing conversion to adding songs to playlists. Why? Because it’s likely that the number of users who add songs to playlists will go up, and those newly converted users will probably start with only a few songs each. That pulls the median down, so the metric we chose gets worse even though the release did what we wanted.
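A small worked example of the median problem, using made-up numbers and assuming one playlist per user for simplicity:

```python
from statistics import median

# Hypothetical songs-per-playlist counts, keyed by user. All numbers are invented.
before_release = {"u1": 12, "u2": 9, "u3": 10}
# After the release, four new users start adding songs, each with only a few.
after_release = {**before_release, "u4": 2, "u5": 1, "u6": 3, "u7": 2}

users_before = len(before_release)
users_after = len(after_release)
median_before = median(before_release.values())
median_after = median(after_release.values())

print(f"users adding songs: {users_before} -> {users_after}")
print(f"median songs per playlist: {median_before} -> {median_after}")
```

In this invented data the number of users adding songs more than doubles while the median drops from 10 to 3. A count of users who add at least one song would have told the success story directly.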

This exercise will surface a set of metrics. It’s a good exercise, and will help clarify your success criteria. However, it’s important to prioritize them. You should choose the simplest metric that you’re willing to rely on to determine whether your release was a success or a failure. It will a) align everyone on the most important needle to move, and b) ensure you prioritize and ship the highest-impact lowest-effort tracking when the time comes to cut the analytics scope due to lack of time until release.

3. Design data

Finally, it’s time to design the data. Here we use the metrics we committed to as a guiding light. Our remaining job is to fill in the blanks with the analytics events and properties we’ll need. It’s fun. Like a puzzle. You’ll want to ask questions like:

- Which user journey steps do we need to log to visualize this rate?

- What properties will we need to segment or filter our data by?

Good data will be useful as-is. Bad data will require the data consumer to ask for engineering time and post-processing. For example, if you’re sending data into Amplitude, don’t assume it’s enough to have the “song id”, because an ID means very little to the data consumer in Amplitude. A data expert would have to export the data and perform SQL gymnastics on it to enrich it with the song name or artist name. Which means you’ve killed your self-serve analytics.
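As a minimal sketch of what “useful as-is” can look like, the event below carries the human-readable song and artist names alongside the ID at tracking time. The catalog, the property names, and the `build_song_played_event` helper are hypothetical; the payload is a plain dictionary, not any particular analytics SDK’s format.

```python
# Hypothetical in-app catalog; in a real product this comes from your own data layer.
SONG_CATALOG = {
    "s-42": {"song_name": "Example Song", "artist_name": "Example Artist"},
}


def build_song_played_event(song_id, catalog=SONG_CATALOG):
    """Build a 'Song Played' payload that is readable without a later join."""
    details = catalog.get(song_id, {})
    return {
        "event": "Song Played",
        "properties": {
            "song_id": song_id,  # still useful for joins and deduplication
            "song_name": details.get("song_name"),  # None if the song is unknown
            "artist_name": details.get("artist_name"),
        },
    }


event = build_song_played_event("s-42")
```

The data consumer can now segment by artist or song name directly in the analytics tool, without exporting anything.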

Good data gives insight into important user interactions, and the context around that action. Bad data requires you to wade through random events and properties that were “tracked just because we can” or because you think “one day we might have a question related to that data”.

Good data has a nomenclature and level of granularity that will scale as your tracking needs grow. Bad data is short-sighted and becomes messy fast. For example, bad taxonomies have both “game” and “match” to refer to a game. Or, if you want to specifically monitor whether users were playing their first song, instead of adding a boolean “is first song” property, you might want a numerical property such as “num songs played”. It answers the first-song question and also any later question about the second, fifth or hundredth song.
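A short sketch of why the numerical property scales better than the boolean: the boolean can always be derived from the number, but not the other way around. The property name follows the “num songs played” example above; the helper names are assumptions.

```python
def song_played_properties(songs_played_before):
    """Properties for a hypothetical 'Song Played' event.

    `songs_played_before` is how many songs the user had played before this one.
    """
    return {"num_songs_played": songs_played_before + 1}


def is_first_song(properties):
    """Derive the boolean from the numerical property when a report needs it."""
    return properties["num_songs_played"] == 1


def is_within_first_n_songs(properties, n):
    """A follow-up question the boolean alone could not answer."""
    return properties["num_songs_played"] <= n


first = song_played_properties(0)
tenth = song_played_properties(9)
```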

Implement analytics and ship product with good product analytics

Once you've designed relevant analytics, the final step is shipping your product with these analytics in place:

- Document the event names in a single source of truth. Typically this is done in spreadsheets or JSON files hosted on GitHub. This will ensure consistency across code paths and product platforms (iOS, Android, web, backend).

- Document metric decisions in context with the event names. This context will minimize mis-aligned implementation across platforms (e.g. different timings on Android vs iOS).

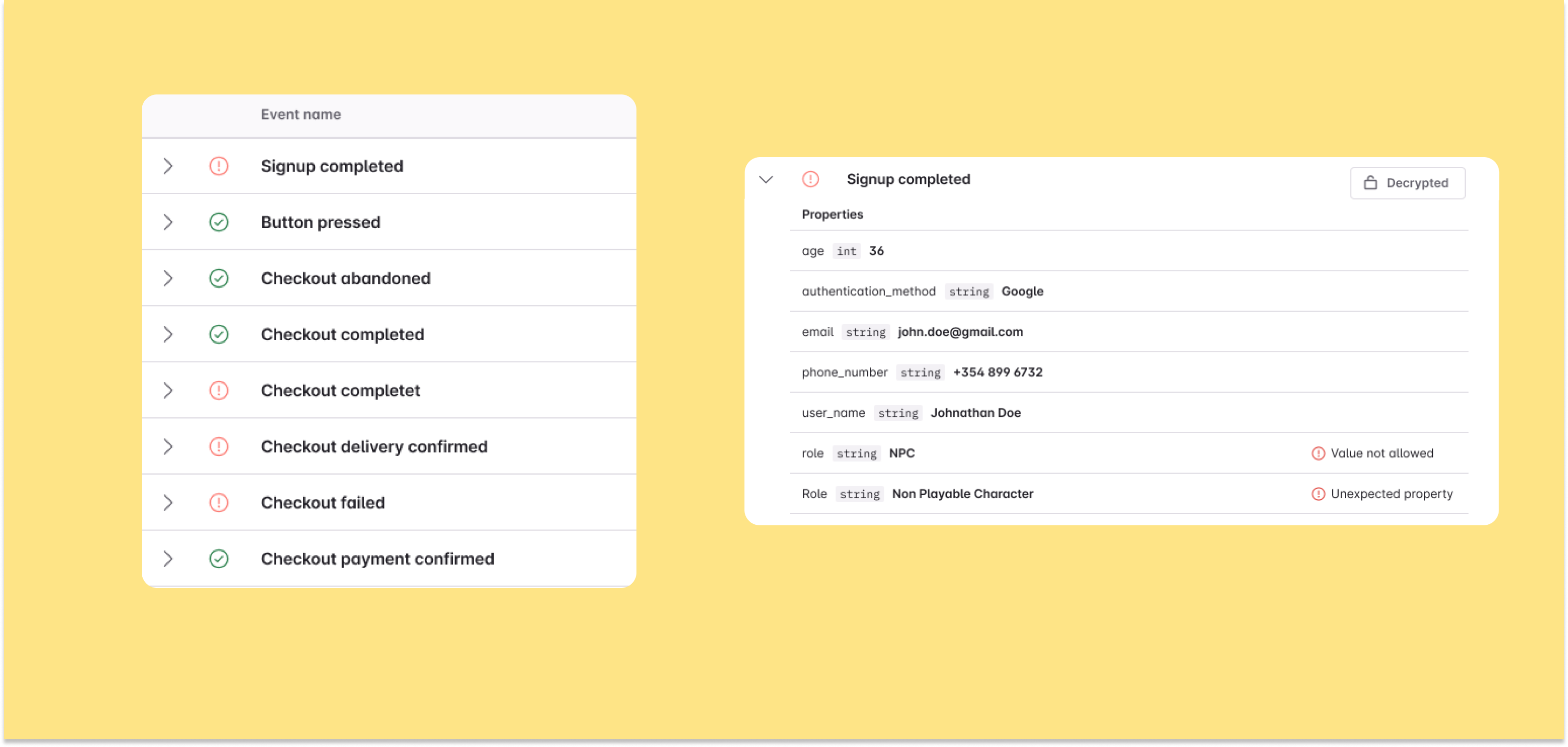

- Test the analytics events before releasing. It's very easy to make human errors in the implementation process, because tracking code is typically not type safe or tested in other ways.
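The checklist above can be sketched as a tiny tracking plan plus a validator that a pre-release test could call. Everything here is an illustrative assumption: the plan format, the event and property names, and the `validate_event` function are not a specific tool's API. In practice the plan would be loaded from the shared JSON file or spreadsheet rather than defined inline.

```python
# Hypothetical single source of truth: event name -> expected property types.
TRACKING_PLAN = {
    "Song Played": {"song_id": str, "song_name": str, "num_songs_played": int},
    "Playlist Created": {"playlist_id": str},
}


def validate_event(name, properties, plan=TRACKING_PLAN):
    """Return a list of problems; an empty list means the event matches the plan."""
    if name not in plan:
        return [f"unknown event: {name!r}"]
    expected = plan[name]
    problems = []
    for prop, prop_type in expected.items():
        if prop not in properties:
            problems.append(f"missing property: {prop!r}")
        elif not isinstance(properties[prop], prop_type):
            problems.append(
                f"wrong type for {prop!r}: expected {prop_type.__name__}"
            )
    for prop in properties:
        if prop not in expected:
            problems.append(f"unexpected property: {prop!r}")
    return problems


ok = validate_event(
    "Song Played",
    {"song_id": "s-42", "song_name": "Example Song", "num_songs_played": 1},
)
typo = validate_event("song_played", {})
```

Running the same check on every platform against the same plan is one way to catch naming drift, such as “song_played” on Android and “Song Played” on iOS, before it reaches your charts.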

Finally, you can build the charts for your metrics once the product is out, and monitor the success of your release.

Product is a team sport. I recommend scheduling a review session with your team once the feature has been out for a few days or a couple of weeks, to sit down, review success, and identify improvement opportunities.

We’d love to hear from you. What framework do you use that’s similar to the purpose meeting? Do you have any additional tips?

We'll be sharing more on this blog about best practices in product analytics and how Avo can help you reach that dream state, where everyone has access to reliable analytics and can make data-driven decisions to drive growth. We’re in this together, and we can’t wait to show you how.
